Improving Visual Experience With AMD FreeSync / Nvidia G-Sync

The GPU is responsible for creating whatever is displayed on the screen, but when the monitor and the graphics card do not “run” at the same rate, image quality suffers. Here is how Nvidia G-Sync and AMD FreeSync overcome specific technical limitations to improve graphics quality.

- How animations work

- How is the GPU’s frame rate different?

- Monitor refresh rate

- Visual issues when the refresh rate is not synchronized with the produced FPS

- Methods for improving visual experience

- FreeSync or G-Sync?

How animations work

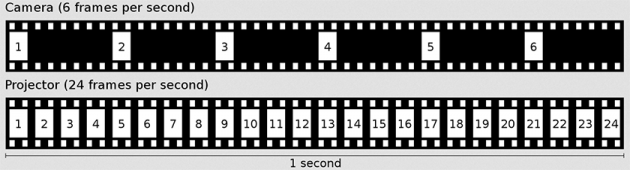

As you probably know, every screen animation is actually a sequence of static images. These images alternate quite quickly so that the brain does not perceive the difference, and just sees a continuous animation.

Each individual static image is called a frame, while the frequency at which consecutive frames are displayed is called frame rate and is expressed in frames per second (FPS).

In cinema, the standard frame rate is 24 FPS. On TV, the European PAL/SECAM standard is 25 FPS, while the US NTSC standard is approximately 29.97 FPS (film material is slowed to 23.976 FPS for NTSC broadcast).
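To make the relationship concrete, a frame rate can be converted into the time each frame stays on screen. The following is a minimal Python sketch; the function name `frame_interval_ms` is chosen here for illustration and does not come from any library.

```python
def frame_interval_ms(fps):
    """Return how long a single frame stays on screen, in milliseconds."""
    return 1000.0 / fps


# Frame rates quoted above for cinema and PAL/SECAM television.
for name, fps in [("cinema", 24), ("PAL/SECAM", 25)]:
    print(f"{name}: {fps} FPS -> {frame_interval_ms(fps):.2f} ms per frame")
```

At 25 FPS each frame is shown for 40 ms; at 24 FPS it is shown slightly longer, roughly 41.67 ms.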

There is other terminology relating to frames, such as progressive and interlaced formats. However, for the purposes of this article, the simple definition of FPS is sufficient.

How is the GPU’s frame rate different?

In computers, the concept of creating an animated display is exactly the same. The difference, however, is that instead of displaying ready-made images, the graphics card must create each frame in real time before displaying it on the screen.

So, while the frame rate is fixed in cinema and TV, on a computer it changes continuously, and its current value depends on various factors. For example, some games are locked to a maximum frame rate of 30 FPS. In most cases, however, the main limitation is the hardware. The GPU plays the most important role here, with the CPU coming second.

When playing demanding games, the graphics card has a larger workload because it needs to draw complex images. The more complex each frame is, the more time it will take the graphics card to draw it, and as a result, fewer frames will be displayed per second.
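The link between per-frame render time and FPS can be sketched in a few lines of Python. The render times below are made-up numbers for illustration, not measurements from any real game or GPU, and `average_fps` is a hypothetical helper.

```python
def average_fps(render_times_ms):
    """Average frames per second over a run of per-frame render times."""
    total_seconds = sum(render_times_ms) / 1000.0
    return len(render_times_ms) / total_seconds


# Hypothetical render times in milliseconds (assumed values).
simple_scene = [8.0, 8.5, 7.5, 8.0]        # light workload
complex_scene = [24.0, 26.0, 25.0, 25.0]   # heavy workload

print(f"simple scene:  {average_fps(simple_scene):.1f} FPS")
print(f"complex scene: {average_fps(complex_scene):.1f} FPS")
```

With these assumed numbers, the simple scene averages about 125 FPS and the complex one about 40 FPS: tripling the time spent per frame cuts the frame rate to a third.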

The general rule is that the higher the FPS, the better the visual result. On this website you can see how much smoother the animation looks when the FPS is higher.

Monitor refresh rate

The refresh rate refers to how many times per second the monitor will update its screen contents, and is measured in Hz (Hertz). If a monitor has a refresh rate of 75Hz, it means that within a single second it is able to show you 75 frames.

Most monitors have a refresh rate of 60Hz, which is sufficient for the average user. However, more advanced monitors with higher refresh rates (120Hz, 144Hz, etc.) are available for gamers, with their cost being considerably higher than simple 60Hz monitors.

Visual issues when the refresh rate is not synchronized with the produced FPS

If the FPS that the graphics card can produce is almost the same as the monitor’s refresh rate, then you will have a very smooth visual experience. However, this is not always the case, which causes a number of problems, especially during gaming.

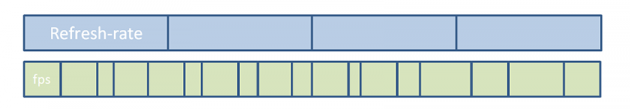

The graphics card constantly changes the rate with which it creates frames. On the other hand, the screen is always refreshed at the same, constant rate. A visual representation would look something like this:

In the positions where the refresh rate and FPS do not meet, one or both of the following issues occur:

Tearing

Tearing occurs when the graphics card sends one or more new frames while the monitor is in the middle of a refresh. The result is that part of the old frame and part of the new one are displayed together, as if the image had been cut horizontally into two or more pieces.

This is usually the case with systems equipped with powerful graphics cards, since they produce more frames per second than the monitor can refresh.
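Where the tear appears depends on how far the monitor has progressed through its refresh when the new frame arrives. The sketch below is a simplified model, assuming the screen is drawn top to bottom at a uniform speed over the whole refresh interval (real displays also have a blanking period, which is ignored here). `tear_row` and the 1080-row default are illustrative choices.

```python
def tear_row(swap_time_ms, refresh_hz, screen_rows=1080):
    """Approximate row at which a mid-refresh frame swap splits the picture.

    Simplified model: the refresh sweeps the rows from top to bottom at a
    constant speed and takes the full refresh interval to do so.
    """
    interval_ms = 1000.0 / refresh_hz
    # Fraction of the current refresh already drawn when the swap happens.
    progress = (swap_time_ms % interval_ms) / interval_ms
    return min(screen_rows - 1, int(progress * screen_rows))


# Hypothetical case: a frame finishes every 10 ms (100 FPS) on a 60 Hz monitor.
for swap_time in (10.0, 20.0, 30.0, 40.0):
    print(f"swap at {swap_time:.0f} ms -> tear near row {tear_row(swap_time, 60)}")
```

Because 10 ms is not a multiple of the 60 Hz refresh interval, each swap lands at a different height, which is why tear lines seem to wander up and down the screen.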

Lagging/stuttering

Lagging, or stuttering, is the opposite problem. The graphics card has not finished a new frame by the time the screen refreshes, so the previous frame is displayed again.
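A small simulation can count how often a frame has to be shown twice. This is a sketch under simple assumptions: the monitor refreshes at perfectly regular intervals and always shows the newest finished frame. The function name and the frame timings are hypothetical.

```python
from bisect import bisect_right


def count_repeated_refreshes(frame_ready_ms, refresh_hz, refreshes):
    """Count refreshes that show the same frame as the previous refresh.

    frame_ready_ms must be sorted in ascending order.
    """
    interval_ms = 1000.0 / refresh_hz
    repeated = 0
    last_shown = None
    for tick in range(1, refreshes + 1):
        now = tick * interval_ms
        # Index of the newest frame finished by this refresh (-1 if none yet).
        newest = bisect_right(frame_ready_ms, now) - 1
        if newest >= 0 and newest == last_shown:
            repeated += 1
        last_shown = newest
    return repeated


# Hypothetical GPU delivering a frame every 25 ms (40 FPS) to a 60 Hz monitor.
ready_times = [20.0 + 25.0 * i for i in range(8)]
print(count_repeated_refreshes(ready_times, refresh_hz=60, refreshes=12))
```

With these assumed timings, three of the twelve refreshes have no new frame to show and repeat the old one, which the viewer perceives as stutter.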

Methods for improving visual experience

Because these problems reduce viewing quality, a number of technologies have been developed to improve graphics, overcoming technical limitations.

V-Sync

V-Sync is a setting that can be found in most modern video games. When you enable V-Sync, it detects the refresh rate of your monitor and "locks" the FPS to that number. With V-Sync enabled, the graphics card will never send more frames than the screen can display, so the tearing problem is eliminated.

However, the way V-Sync works causes issues of its own. The graphics card waits for the monitor to refresh before sending the next frame. If the frame is not ready at the moment the screen is refreshed, it has to wait for the next refresh. If this happens often, the FPS will be reduced to a fraction of the refresh rate.

To understand the difference, you can see what animation looks like with V-Sync disabled and what it looks like when it's enabled.
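The "fraction of the refresh rate" effect follows from simple arithmetic. The sketch below assumes a constant render time and plain double-buffered V-Sync, where a frame that misses one refresh waits for the next; `vsync_fps` is a name made up for this example.

```python
import math


def vsync_fps(render_time_ms, refresh_hz):
    """Effective FPS when every frame must wait for a monitor refresh."""
    interval_ms = 1000.0 / refresh_hz
    # A frame that misses a refresh has to wait for the next one, so each
    # frame occupies a whole number of refresh intervals.
    refreshes_per_frame = max(1, math.ceil(render_time_ms / interval_ms))
    return refresh_hz / refreshes_per_frame


# Assumed render times on a 60 Hz monitor.
for render_time in (10.0, 20.0, 35.0):
    print(f"{render_time:.0f} ms per frame -> {vsync_fps(render_time, 60):.0f} FPS")
```

On a 60 Hz monitor, a frame that takes 20 ms just misses the 16.7 ms refresh window and is held for two refreshes, so the game runs at 30 FPS even though the GPU could manage 50 FPS without V-Sync.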

Nvidia G-Sync

The solution to V-Sync's problems was first introduced by Nvidia in early 2014 with its G-Sync technology. Instead of trying to adjust the FPS produced by the graphics card, it adjusts the monitor's refresh rate.

G-Sync is able to control the screen refresh rate, so that at each moment it matches the FPS produced by the GPU. The result looks something like this.

Of course, there are limits to how far the refresh rate can be increased or decreased, and these depend on the monitor. While some monitors have a very narrow range, e.g. 40-75Hz, others have a wider one.
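The idea of a refresh rate that follows the GPU within a supported range can be sketched as a simple clamp. This is a conceptual illustration only, not how G-Sync is implemented; what actually happens outside the range depends on the monitor and driver. The function name and the 40-75Hz range are taken as an example.

```python
def variable_refresh_hz(gpu_fps, min_hz, max_hz):
    """Refresh rate a variable-refresh monitor would use for a given FPS.

    Inside the supported range the monitor follows the GPU exactly;
    outside it, this sketch simply pins the rate to the nearest limit.
    """
    return max(min_hz, min(max_hz, gpu_fps))


# Example monitor with a 40-75 Hz range, as mentioned above.
for fps in (30, 55, 60, 90):
    print(f"GPU at {fps} FPS -> monitor at {variable_refresh_hz(fps, 40, 75)} Hz")
```

At 55 or 60 FPS the monitor matches the GPU exactly. At 30 or 90 FPS the two rates can no longer be matched, which is why a wider range is preferable.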

With G-Sync, the previously described problems are solved: there is no more tearing or lagging, as long as the FPS stays within the range the monitor supports. But now a third problem is created: the monitor needs special equipment to be able to use G-Sync. It must be specially designed by the manufacturer, with an integrated Nvidia G-Sync controller. And guess which is the only company that produces this equipment. Yes, Nvidia.

Monitor manufacturers need to buy special equipment from Nvidia, as well as the necessary license to use this technology, which increases the final cost of the monitors.

AMD FreeSync

About a year after the release of G-Sync, AMD released its own version, namely FreeSync. FreeSync works in a similar way, and the improvements in graphics quality are just as impressive as with G-Sync.

The main difference with FreeSync is that the monitors do not need special equipment created by AMD; FreeSync is built on the royalty-free VESA Adaptive-Sync standard, as the word "free" in its name suggests.

The equipment can be manufactured by any company, so FreeSync monitors are usually more affordable.

FreeSync or G-Sync?

Well, since FreeSync does the same thing as G-Sync and costs less, why choose a G-Sync monitor?

The answer is relatively simple. Each of these technologies only works with the corresponding graphics card. If you have an Nvidia graphics card, you can only use G-Sync. Alternatively, if you have an AMD GPU, only FreeSync will work on your computer.

The only thing that is certain is that this purchase is an investment that will last for several years. With few exceptions, you will upgrade the rest of your equipment at least once before replacing your monitor. Therefore, choosing between the two technologies practically means "getting married" to one company or the other for your future GPU upgrades.

Of course, nothing prevents you from buying a monitor without any of these technologies. In fact, if you are not into gaming at all, then there is no reason to invest in such a monitor.

How to tell if a monitor supports FreeSync or G-Sync?

Unfortunately, a lot of websites do not mention whether a monitor supports FreeSync or G-Sync. The only reliable solution in this case is to check the product page on the manufacturer’s official website.

Have you ever used FreeSync or G-Sync? Let us know your thoughts in the comments section below!